* feat: introduce flow_id with timestamp-based report versioning

Replace run_id with flow_id as the primary grouping concept (one flow =

one user analysis intent spanning scan + pipeline + portfolio). Reports

are now written as {timestamp}_{name}.json so load methods always return

the latest version by lexicographic sort, eliminating the latest.json

pointer pattern for new flows.
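The timestamped naming described above can be sketched as follows. This is a minimal illustration, not the project's `report_paths.py`: the helper names `ts_stamp`, `save_report`, and `load_latest`, and the exact stamp format, are assumptions. The point it shows is that a fixed-width, zero-padded timestamp prefix makes a plain lexicographic sort equivalent to a chronological one, so no `latest.json` pointer is needed.

```python
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def ts_stamp(now=None):
    """Fixed-width UTC timestamp with millisecond precision (assumed format)."""
    now = now or datetime.now(timezone.utc)
    return now.strftime("%Y%m%dT%H%M%S") + f"{now.microsecond // 1000:03d}"


def save_report(flow_dir, name, payload, now=None):
    """Write {timestamp}_{name}.json; each save gets its own stamped file."""
    flow_dir.mkdir(parents=True, exist_ok=True)
    path = flow_dir / f"{ts_stamp(now)}_{name}.json"
    path.write_text(json.dumps(payload))
    return path


def load_latest(flow_dir, name):
    """Return the newest version of a report, or None if none exists."""
    # The stamp contains no underscore, so splitting once isolates the report name.
    versions = sorted(
        p for p in flow_dir.glob("*.json")
        if p.name.split("_", 1)[-1] == f"{name}.json"
    )
    return json.loads(versions[-1].read_text()) if versions else None


with tempfile.TemporaryDirectory() as tmp:
    flow_dir = Path(tmp) / "flow_demo"  # hypothetical flow directory
    first = datetime(2026, 3, 21, 9, 0, 0, tzinfo=timezone.utc)
    second = datetime(2026, 3, 21, 9, 0, 0, 5000, tzinfo=timezone.utc)  # 5 ms later
    save_report(flow_dir, "scan", {"version": 1}, first)
    save_report(flow_dir, "scan", {"version": 2}, second)
    latest = load_latest(flow_dir, "scan")
    print(latest)  # → {'version': 2}
```

Sorting only works this way while every stamp has the same width; a stamp without zero padding would break the ordering.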

Key changes:

- report_paths.py: add generate_flow_id(), ts_now() (ms precision),

flow_id kwarg on all path helpers; keep run_id / pointer helpers for

backward compatibility

- ReportStore: dual-mode save/load — flow_id uses timestamped layout,

run_id uses legacy runs/{id}/ layout with latest.json

- MongoReportStore: add flow_id field and index; run_id stays for compat

- DualReportStore: expose flow_id property

- store_factory: accept flow_id as primary param, run_id as alias

- runs.py / langgraph_engine.py: generate and thread flow_id through all

trigger endpoints and run methods

- Tests: add flow_id coverage for all layers; 905 tests pass

Co-Authored-By: Claude Sonnet 4.6 <noreply@anthropic.com>

* feat: load flow_id in FE to resume runs and fix max_tickers cap on continuation

- Add flow_id to RunParams interface and initial state

- loadRun() now restores flow_id + max_auto_tickers from history so the next

run continues in the same flow directory (Phase 1 scan skipped, already-done

tickers skipped via skip-if-exists logic)

- startRun() spreads flow_id into the request body when set, letting the backend

reuse the existing flow directory instead of generating a fresh flow_id

- After each run, params.flow_id is updated from the response so subsequent

runs automatically continue from the same flow

- max_auto_tickers restored from run.params.max_tickers ensures the ticker cap

matches the original run; scan_tickers[:max_t] on the backend then limits

the Phase 2 queue to the user's setting even when the existing scan has more
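The backend side of this continuation logic might look roughly like the sketch below. The function names `resolve_flow_id` and `phase2_queue` are hypothetical, `uuid4` is only a stand-in for the real `generate_flow_id()`, and the order of operations (cap first, then skip finished tickers) is an assumption rather than something the commit states.

```python
import uuid


def resolve_flow_id(body):
    """Reuse the client's flow_id when present; otherwise start a new flow."""
    # uuid4 is a stand-in: the real generate_flow_id() format is not shown here.
    return body.get("flow_id") or uuid.uuid4().hex


def phase2_queue(scan_tickers, max_tickers, already_done):
    """Cap the queue at the user's limit, then skip tickers that already have reports."""
    capped = scan_tickers[:max_tickers]
    return [t for t in capped if t not in already_done]


body = {"flow_id": "flow_abc", "max_tickers": 3}  # request body from a resumed run
flow_id = resolve_flow_id(body)
queue = phase2_queue(
    ["NVDA", "AAPL", "MSFT", "AMD", "TSLA"],  # existing scan has more than the cap
    body["max_tickers"],
    already_done={"AAPL"},
)
print(flow_id, queue)  # → flow_abc ['NVDA', 'MSFT']
```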

Co-Authored-By: Claude Sonnet 4.6 <noreply@anthropic.com>

* fix(mongo): fast-fail timeout + lazy ensure_indexes to avoid 30s block on fallback

MongoClient previously used pymongo's 30-second serverSelectionTimeoutMS default,

causing store_factory to hang for 30s before falling back to the filesystem when

Atlas is unreachable. Also, ensure_indexes() was called eagerly in __init__,

making every store construction attempt block on a live network call.

- Set serverSelectionTimeoutMS=5_000 so fallback is triggered in ≤5s

- Move ensure_indexes() call out of __init__ — indexes are now created lazily

on the first _save() call via a guarded self._indexes_ensured flag

- ensure_indexes() is still idempotent and safe to call explicitly in tests
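A minimal sketch of the lazy-index pattern from the list above, assuming a collection object that exposes `create_index` and `insert_one` as pymongo collections do. The class `LazyIndexStore` and the in-memory `FakeCollection` are illustrative stand-ins so the example runs without a server. With pymongo, the fast-fail part corresponds to passing `serverSelectionTimeoutMS=5000` to `MongoClient`.

```python
class LazyIndexStore:
    """Defers index creation until the first write (sketch, not the real MongoReportStore)."""

    def __init__(self, collection):
        # No network call here: construction only stores the handle.
        self._col = collection
        self._indexes_ensured = False

    def ensure_indexes(self):
        """Idempotent: calls after the first successful one are no-ops."""
        if self._indexes_ensured:
            return
        self._col.create_index("flow_id")
        self._indexes_ensured = True

    def _save(self, doc):
        self.ensure_indexes()
        self._col.insert_one(doc)


class FakeCollection:
    """In-memory stand-in that records calls."""

    def __init__(self):
        self.index_calls = 0
        self.docs = []

    def create_index(self, keys):
        self.index_calls += 1

    def insert_one(self, doc):
        self.docs.append(doc)


col = FakeCollection()
store = LazyIndexStore(col)
calls_after_init = col.index_calls  # still 0: nothing touched the collection yet
store._save({"flow_id": "f1", "name": "scan"})
store._save({"flow_id": "f1", "name": "portfolio"})
print(calls_after_init, col.index_calls, len(col.docs))  # → 0 1 2
```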

Co-Authored-By: Claude Sonnet 4.6 <noreply@anthropic.com>

* fix(store): wrap all DualReportStore mongo calls in _try_mongo() for graceful degradation

Any MongoDB exception (SSL error, ServerSelectionTimeout, auth failure) was

propagating uncaught through DualReportStore and crashing the run. Reads

would return an error instead of falling back to local, and writes would

abort mid-run without saving anything.

Introduce a single _try_mongo(fn, default) helper that:

- Executes the Mongo callable

- Catches *any* exception, logs it as WARNING with type + message

- Returns the default value so the caller continues with local-only data

Pattern per method:

writes → try mongo (fire-and-forget); always return local result

reads → try mongo first; fall back to local on None or exception

lists → try mongo; fall back to local on empty/None

Runs now complete successfully even when Atlas is unreachable or returns SSL

errors. MongoDB sync resumes automatically once connectivity is restored.
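The helper and the per-method pattern described above could be sketched like this. It is an illustration under assumptions: `try_mongo`, `DualStoreSketch`, and `UnreachableMongo` are hypothetical names, the local store is a plain dict, and the real `DualReportStore` has more methods than shown.

```python
import logging

logger = logging.getLogger("dual_store_sketch")


def try_mongo(fn, default=None):
    """Run a Mongo callable; on any exception, log a WARNING and return default."""
    try:
        return fn()
    except Exception as exc:
        logger.warning("MongoDB call failed (%s): %s", type(exc).__name__, exc)
        return default


class DualStoreSketch:
    def __init__(self, local, mongo):
        self._local = local  # plain dict standing in for the filesystem store
        self._mongo = mongo

    def save(self, key, doc):
        # Writes: the local result is always kept; the Mongo write is best-effort.
        self._local[key] = doc
        try_mongo(lambda: self._mongo.save(key, doc))
        return doc

    def load(self, key):
        # Reads: Mongo first, local on None or exception.
        doc = try_mongo(lambda: self._mongo.load(key))
        return doc if doc is not None else self._local.get(key)


class UnreachableMongo:
    """Simulates an unreachable cluster: every call raises."""

    def save(self, key, doc):
        raise ConnectionError("server selection timed out")

    def load(self, key):
        raise ConnectionError("server selection timed out")


store = DualStoreSketch({}, UnreachableMongo())
store.save("scan", {"tickers": ["NVDA"]})
loaded = store.load("scan")
print(loaded)  # → {'tickers': ['NVDA']}
```

Catching `Exception` this broadly is normally discouraged; it is a deliberate trade-off here because the Mongo layer is treated as optional and the local store remains the source of truth.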

Co-Authored-By: Claude Sonnet 4.6 <noreply@anthropic.com>

* fix(observability): non-blocking MongoDB inserts + 5s timeout in RunLogger

Every LLM and tool callback called _append() which synchronously called

insert_one() against MongoDB. When Atlas was unreachable this blocked the

entire LangGraph run for pymongo's 30-second default timeout per event,

effectively serializing all agent work behind MongoDB retries.

Two fixes:

1. serverSelectionTimeoutMS=5_000 on the RunLogger's MongoClient — consistent

with the same fix applied to MongoReportStore.

2. MongoDB inserts are now fire-and-forget via daemon threads — _append() spawns

a Thread(target=_insert, daemon=True) and returns immediately. LLM callbacks

and tool events are never delayed by MongoDB connectivity issues.

Failures are still reported via WARNING log from the background thread.

Co-Authored-By: Claude Sonnet 4.6 <noreply@anthropic.com>

* revert(observability): restore synchronous MongoDB inserts in RunLogger

Root cause was an IP whitelist issue on Atlas causing SSL failures, not

insert volume. The background-thread approach added unnecessary complexity.

The 5s serverSelectionTimeoutMS is retained as a defensive safeguard.

Co-Authored-By: Claude Sonnet 4.6 <noreply@anthropic.com>

---------

Co-authored-by: Claude Sonnet 4.6 <noreply@anthropic.com>


TradingAgents: Multi-Agents LLM Financial Trading Framework

News

- [2026-03] TradingAgents v0.2.2 released with GPT-5.4/Gemini 3.1/Claude 4.6 model coverage, five-tier rating scale, OpenAI Responses API, Anthropic effort control, and cross-platform stability.

- [2026-02] TradingAgents v0.2.0 released with multi-provider LLM support (GPT-5.x, Gemini 3.x, Claude 4.x, Grok 4.x) and improved system architecture.

- [2026-01] Trading-R1 Technical Report released, with Terminal expected to land soon.

🎉 TradingAgents officially released! We have received numerous inquiries about the work, and we would like to express our thanks for the enthusiasm in our community.

So we decided to fully open-source the framework. Looking forward to building impactful projects with you!

🚀 TradingAgents | ⚡ Installation & CLI | 🎬 Demo | 📦 Package Usage | 🤝 Contributing | 📄 Citation

TradingAgents Framework

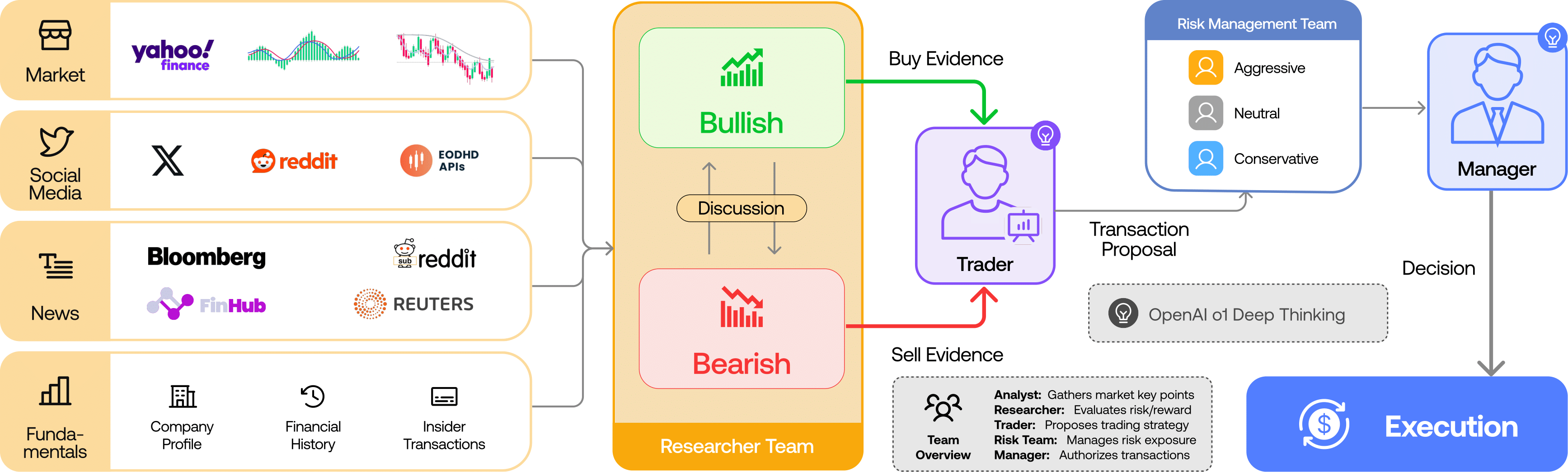

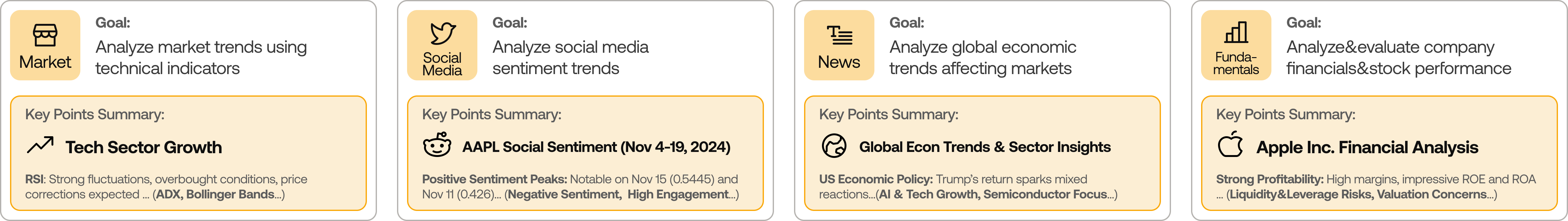

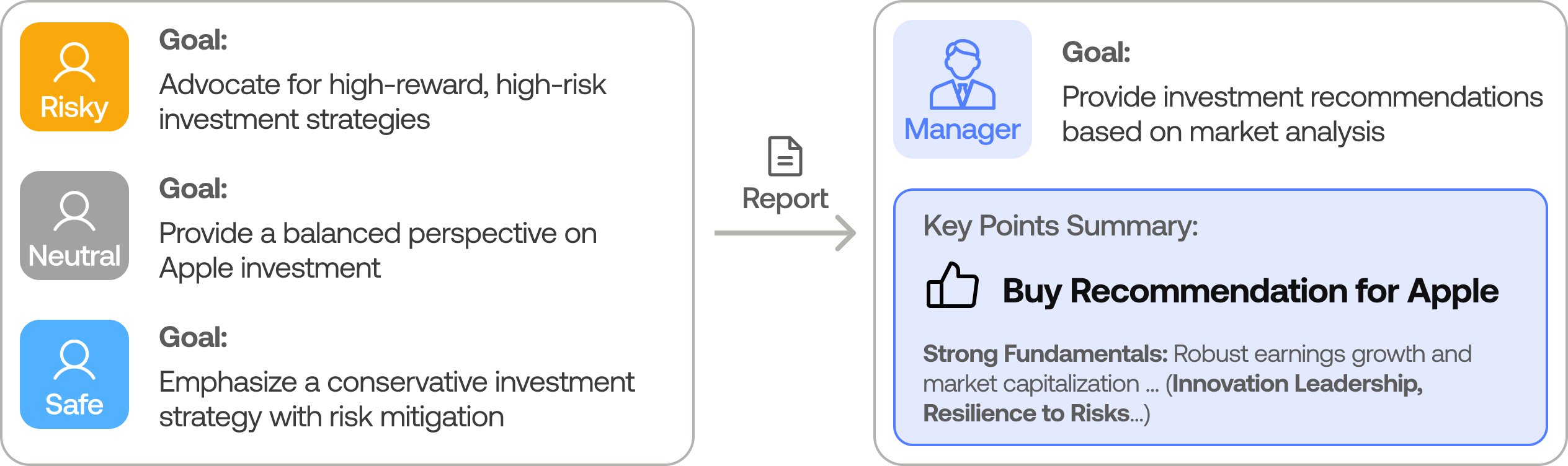

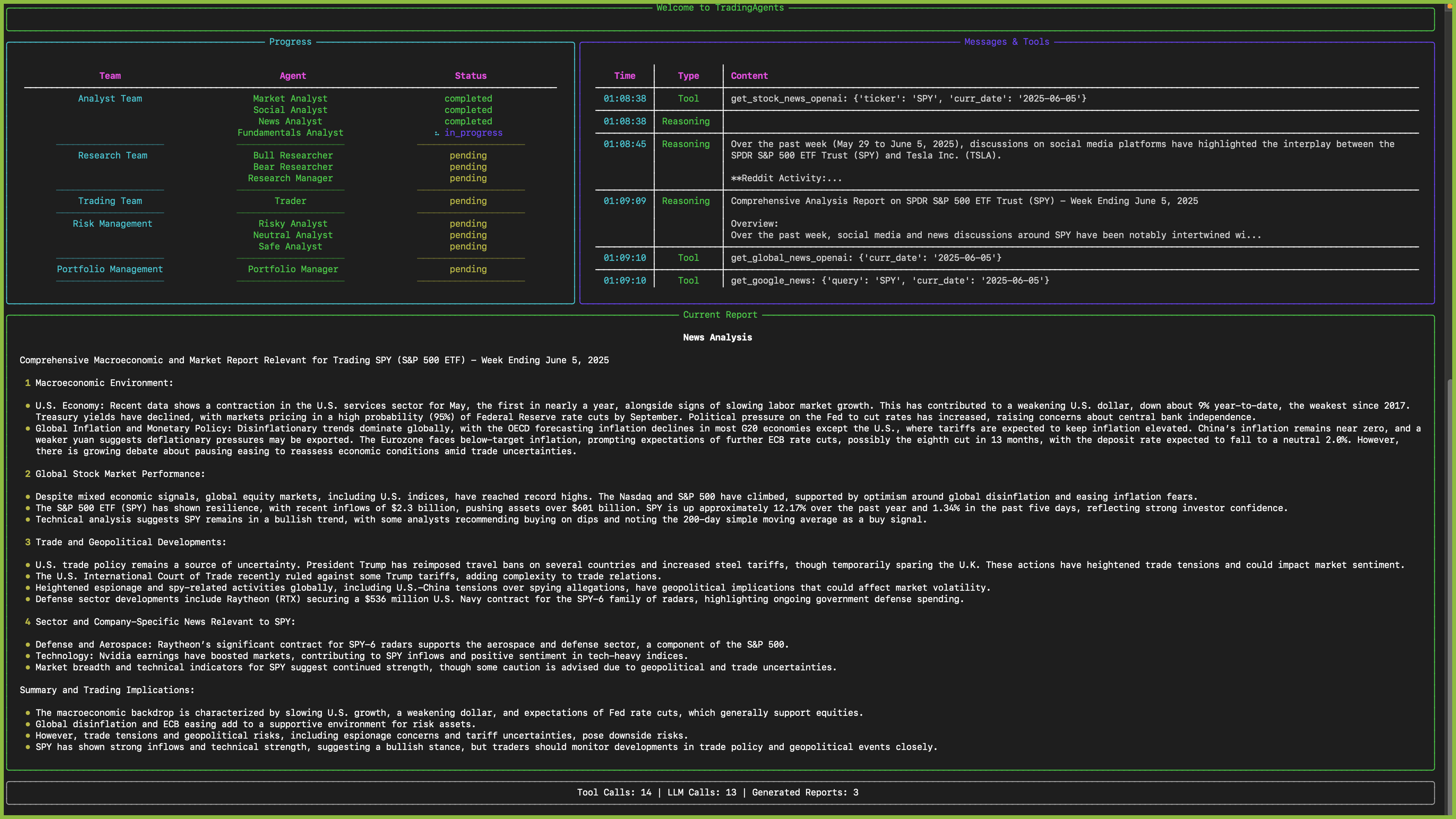

TradingAgents is a multi-agent trading framework that mirrors the dynamics of real-world trading firms. It deploys specialized LLM-powered agents, from fundamental analysts, sentiment experts, and technical analysts to a trader and a risk management team, that collaboratively evaluate market conditions and inform trading decisions. These agents also engage in dynamic discussions to pinpoint the optimal strategy.

TradingAgents framework is designed for research purposes. Trading performance may vary based on many factors, including the chosen backbone language models, model temperature, trading periods, the quality of data, and other non-deterministic factors. It is not intended as financial, investment, or trading advice.

Our framework decomposes complex trading tasks into specialized roles. This ensures the system achieves a robust, scalable approach to market analysis and decision-making.

Analyst Team

- Fundamentals Analyst: Evaluates company financials and performance metrics, identifying intrinsic values and potential red flags.

- Sentiment Analyst: Analyzes social media and public sentiment using sentiment scoring algorithms to gauge short-term market mood.

- News Analyst: Monitors global news and macroeconomic indicators, interpreting the impact of events on market conditions.

- Technical Analyst: Utilizes technical indicators (like MACD and RSI) to detect trading patterns and forecast price movements.

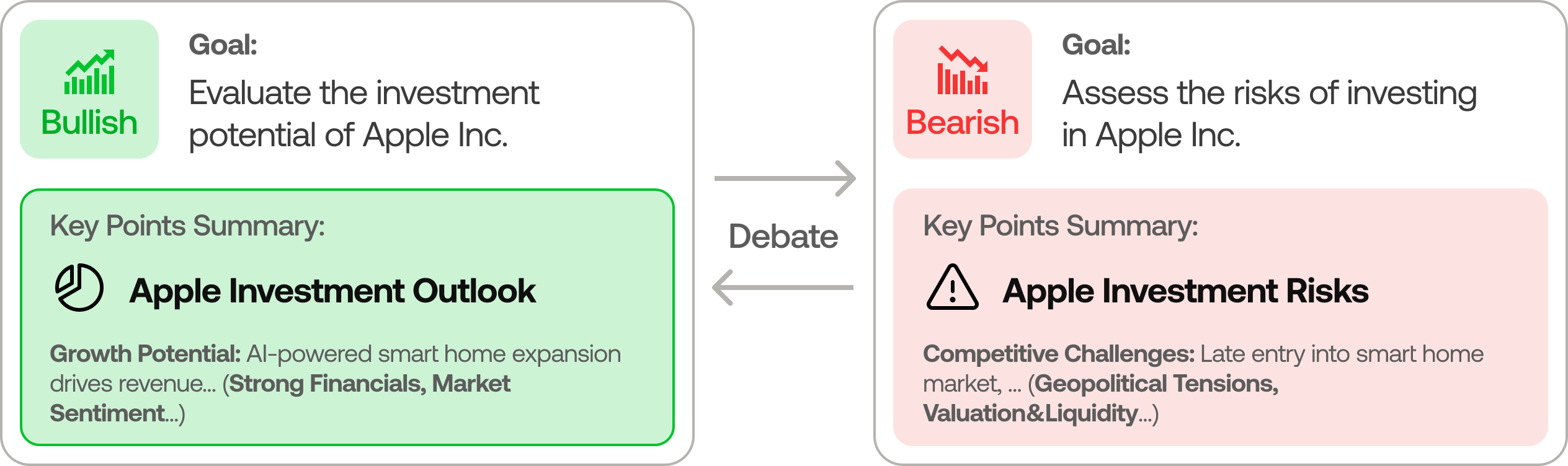

Researcher Team

- Comprises both bullish and bearish researchers who critically assess the insights provided by the Analyst Team. Through structured debates, they balance potential gains against inherent risks.

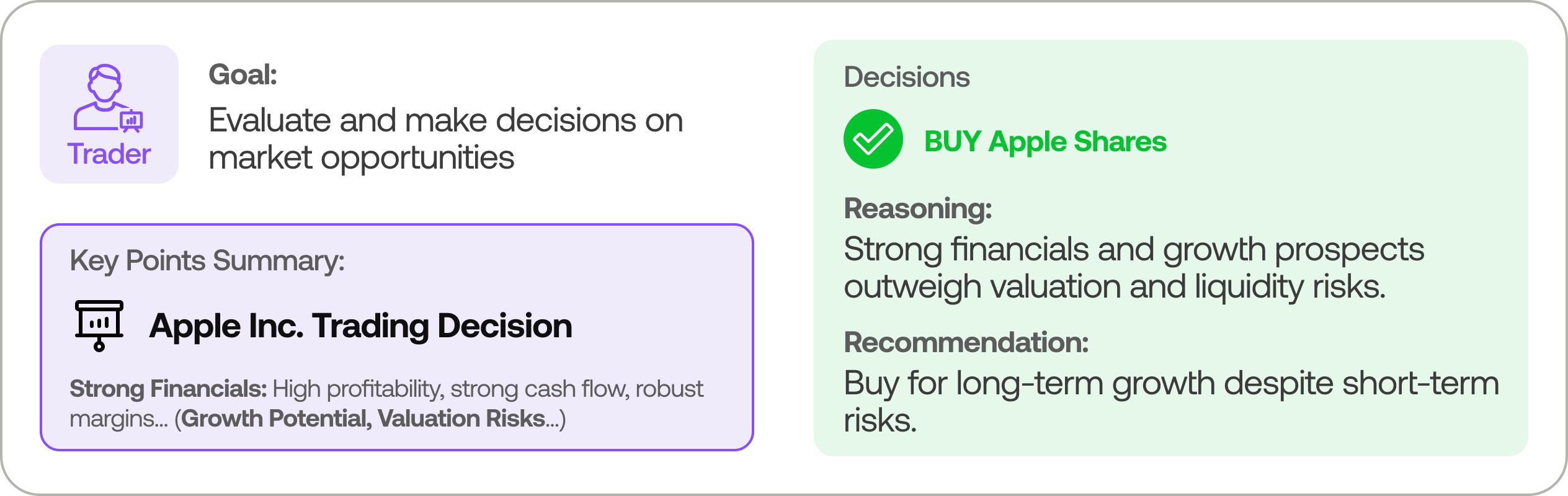

Trader Agent

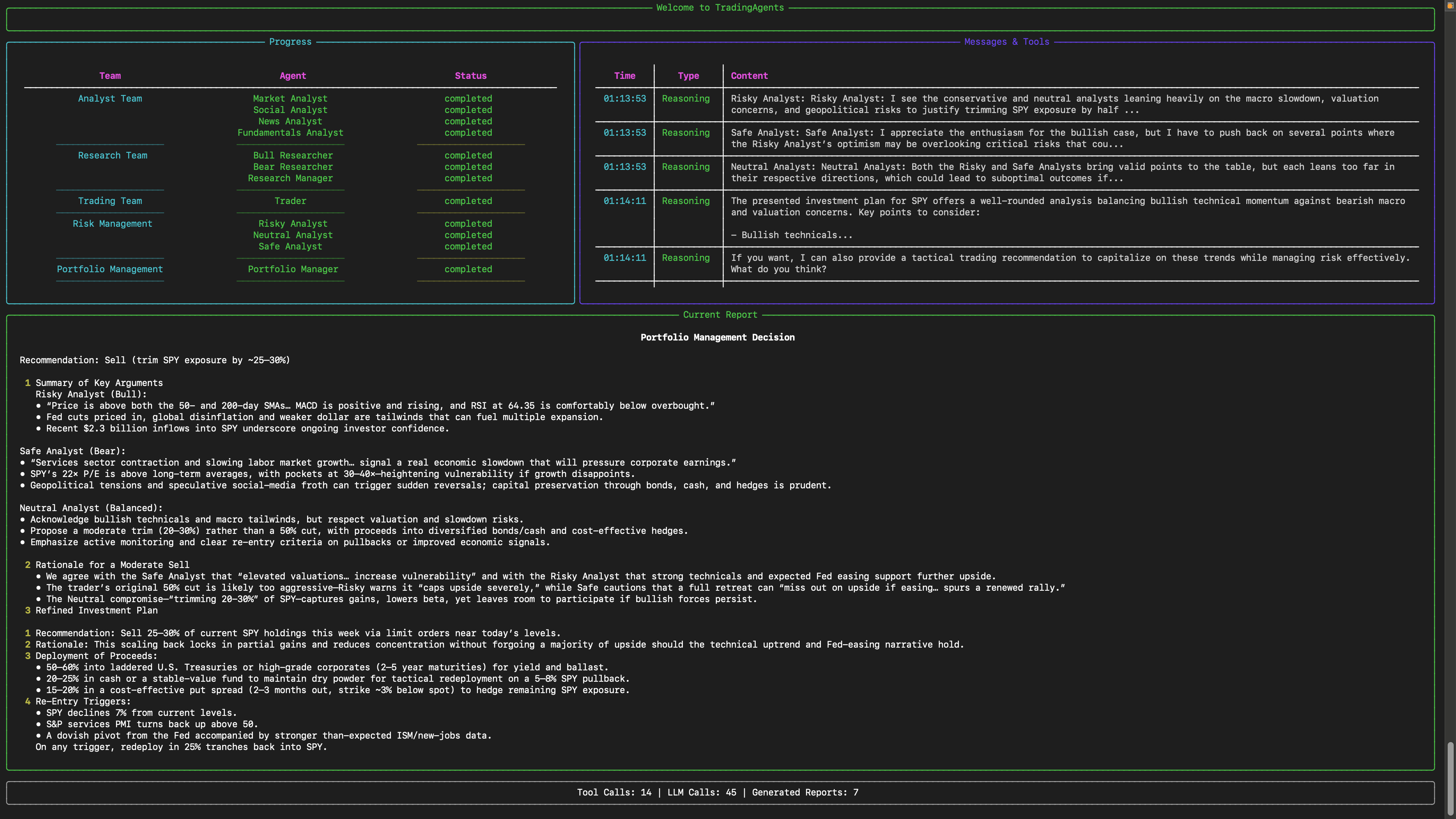

- Composes reports from the analysts and researchers to make informed trading decisions. It determines the timing and magnitude of trades based on comprehensive market insights.

Risk Management and Portfolio Manager

- Continuously evaluates portfolio risk by assessing market volatility, liquidity, and other risk factors. The risk management team evaluates and adjusts trading strategies, providing assessment reports to the Portfolio Manager for a final decision.

- The Portfolio Manager approves/rejects the transaction proposal. If approved, the order will be sent to the simulated exchange and executed.

Installation and CLI

Installation

Clone TradingAgents:

```bash
git clone https://github.com/TauricResearch/TradingAgents.git
cd TradingAgents
```

Create a virtual environment in any of your favorite environment managers:

```bash
conda create -n tradingagents python=3.13
conda activate tradingagents
```

Install the package and its dependencies:

```bash
pip install .
```

Required APIs

TradingAgents supports multiple LLM providers. Set the API key for your chosen provider:

```bash
export OPENAI_API_KEY=...          # OpenAI (GPT)
export GOOGLE_API_KEY=...          # Google (Gemini)
export ANTHROPIC_API_KEY=...       # Anthropic (Claude)
export XAI_API_KEY=...             # xAI (Grok)
export OPENROUTER_API_KEY=...      # OpenRouter
export ALPHA_VANTAGE_API_KEY=...   # Alpha Vantage
```

For local models, configure Ollama with llm_provider: "ollama" in your config.

Alternatively, copy .env.example to .env and fill in your keys:

```bash
cp .env.example .env
```

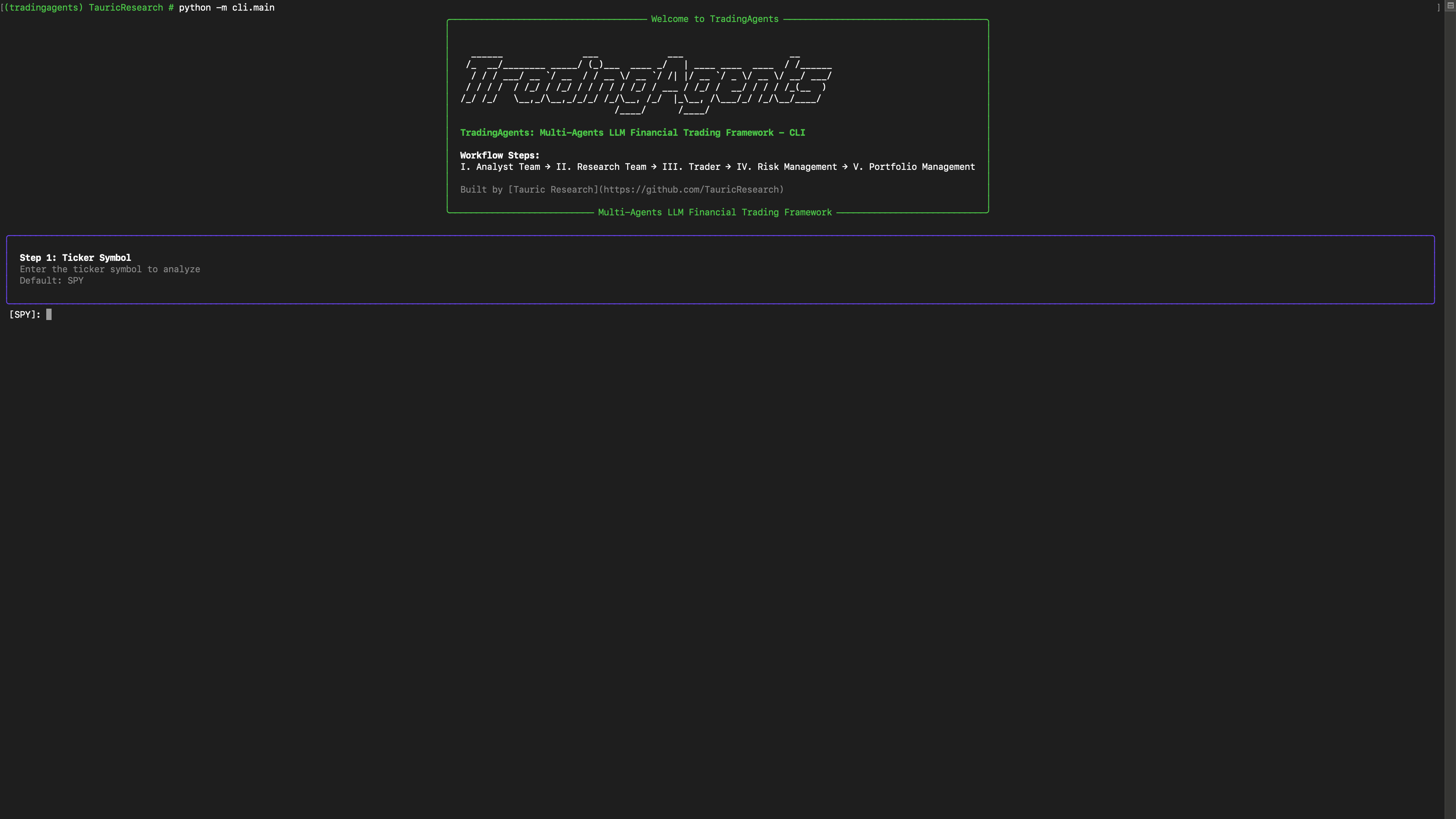

CLI Usage

Launch the interactive CLI:

```bash
tradingagents          # installed command
python -m cli.main     # alternative: run directly from source
```

You will see a screen where you can select your desired tickers, analysis date, LLM provider, research depth, and more.

An interface will appear showing results as they load, letting you track the agent's progress as it runs.

CLI Commands

| Command | Description |
|---|---|
| `analyze` | Interactive per-ticker multi-agent analysis (select analysts, LLM, date) |
| `scan` | Run the 3-phase macro scanner (geopolitical → sector → synthesis) |
| `pipeline` | Full pipeline: macro scan JSON → filter by conviction → per-ticker deep dive |
| `portfolio` | Run the Portfolio Manager workflow (requires portfolio ID + scan JSON) |
| `check-portfolio` | Review current holdings only, with no new candidates |
| `auto` | End-to-end: scan → pipeline → portfolio manager (one command) |

Examples:

```bash
# Per-ticker analysis (interactive prompts for ticker, date, LLM, analysts)
python -m cli.main analyze

# Run macro scanner for a specific date
python -m cli.main scan --date 2026-03-21

# Run the full pipeline (scan → filter → per-ticker analysis)
python -m cli.main pipeline

# Run portfolio manager with a specific portfolio and scan results
python -m cli.main portfolio

# Review current holdings without new candidates
python -m cli.main check-portfolio --portfolio-id main_portfolio --date 2026-03-21

# Full autonomous mode: scan → pipeline → portfolio
python -m cli.main auto --portfolio-id main_portfolio --date 2026-03-21
```

Running Tests

```bash
# Install dev dependencies
pip install -e ".[dev]"

# Run all unit tests (integration and e2e excluded by default)
python -m pytest tests/ -v

# Run only portfolio tests
python -m pytest tests/portfolio/ -v

# Run a specific test file
python -m pytest tests/portfolio/test_models.py -v

# Run tests with coverage (requires pytest-cov)
python -m pytest tests/ --cov=tradingagents --cov-report=term-missing
```

Note: Integration tests that require network access or database connections auto-skip when the relevant environment variables (SUPABASE_CONNECTION_STRING, FINNHUB_API_KEY, etc.) are not set.

TradingAgents Package

Implementation Details

We built TradingAgents with LangGraph to ensure flexibility and modularity. The framework supports multiple LLM providers: OpenAI, Google, Anthropic, xAI, OpenRouter, and Ollama.

Python Usage

To use TradingAgents in your own code, import the tradingagents module and initialize a TradingAgentsGraph() object. The .propagate() method returns a decision. You can run main.py directly, or start from this quick example:

```python
from tradingagents.graph.trading_graph import TradingAgentsGraph
from tradingagents.default_config import DEFAULT_CONFIG

ta = TradingAgentsGraph(debug=True, config=DEFAULT_CONFIG.copy())

# forward propagate
_, decision = ta.propagate("NVDA", "2026-01-15")
print(decision)
```

You can also adjust the default configuration to set your own choice of LLMs, debate rounds, etc.

```python
from tradingagents.graph.trading_graph import TradingAgentsGraph
from tradingagents.default_config import DEFAULT_CONFIG

config = DEFAULT_CONFIG.copy()
config["llm_provider"] = "openai"        # openai, google, anthropic, xai, openrouter, ollama
config["deep_think_llm"] = "gpt-5.2"     # Model for complex reasoning
config["quick_think_llm"] = "gpt-5-mini" # Model for quick tasks
config["max_debate_rounds"] = 2

ta = TradingAgentsGraph(debug=True, config=config)
_, decision = ta.propagate("NVDA", "2026-01-15")
print(decision)
```

See tradingagents/default_config.py for all configuration options.

Contributing

We welcome contributions from the community! Whether it's fixing a bug, improving documentation, or suggesting a new feature, your input helps make this project better. If you are interested in this line of research, please consider joining our open-source financial AI research community Tauric Research.

Citation

Please cite our work if you find TradingAgents helpful :)

```bibtex
@misc{xiao2025tradingagentsmultiagentsllmfinancial,
      title={TradingAgents: Multi-Agents LLM Financial Trading Framework},
      author={Yijia Xiao and Edward Sun and Di Luo and Wei Wang},
      year={2025},
      eprint={2412.20138},
      archivePrefix={arXiv},
      primaryClass={q-fin.TR},
      url={https://arxiv.org/abs/2412.20138},
}
```